AI Document Tools for Surplus Lines Brokers: Which Actually Work

AI DOCUMENT DRAFTING AND REVIEW TOOLS FOR E&S SURPLUS LINES BROKERS: WHAT FITS, WHAT FAILS, AND WHY THE GENERIC ADVICE GETS IT WRONG

The Short Version

Here is the thing the generic AI advice for insurance agencies gets completely wrong about your operation: it assumes your biggest document problem is speed. It is not. Your biggest document problem is liability.

When you place a risk in the surplus lines market, your signature on that placement document certifies its accuracy. That is a legal fact in every state with surplus lines statutes. The law does not care that a software tool wrote the first draft. You signed it. You own it. And in 2025, E&O claims tied to AI-drafted documents started rising. In 2026, that trend is accelerating. [7]

So the first thing to understand is this: no AI drafting tool eliminates your signature liability. That liability is non-delegable. The correct question is not "which tool protects me from liability" but "which tool helps me produce accurate documents while keeping my review process defensible."

That changes the analysis completely.

Here is the conditional answer, before you read any further.

If your operation handles uninsurability documentation, coverage analysis, or declination rationales, no generic AI tool is fit for that work right now. Not ChatGPT. Not Claude. Not Microsoft Copilot. They fail at the specific task of generating compliant surplus lines documents because they were not trained on state surplus lines statutes. That is a CAUSAL finding. The evidence is solid. You can act on it: stop using generic tools for those documents.

If your operation needs help with submission formatting, underwriter pitch decks, and preliminary risk summaries, a properly governed AI drafting tool can help. But you need a governance layer in place first. That means a written review protocol, a documented audit trail, and written confirmation from your E&O carrier that the specific tool you are using is covered. If you do not have those three things, stop before you spend anything.

If you are a specialty E&S shop placing hard-to-place risks and you want a tool that can actually handle uninsurability documentation, the fit-for-purpose market is still developing. There are tools worth piloting. The logic of how they would work checks out. But the hard audit trail evidence that they survive a regulatory exam does not exist yet. That makes them MECHANISM-rated. Worth testing, not ready to rely on.
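The three conditional answers above can be written down as a single decision rule. What follows is a minimal sketch, not a compliance tool. The document category names, the `Governance` class, and the `tool_decision` function are all hypothetical labels invented for illustration; only the groupings and the three governance requirements come from the text.

```python
from dataclasses import dataclass

# Document categories as grouped in the text; the identifiers are illustrative.
REGULATORY_DOCS = {
    "uninsurability_documentation",
    "coverage_analysis",
    "declination_rationale",
}
FRONT_END_DOCS = {
    "submission_formatting",
    "underwriter_pitch_deck",
    "preliminary_risk_summary",
}


@dataclass
class Governance:
    """The three prerequisites named in the text (field names are assumptions)."""
    written_review_protocol: bool
    documented_audit_trail: bool
    eo_carrier_written_confirmation: bool

    def complete(self) -> bool:
        # All three must be in place; two out of three is still a stop.
        return all((
            self.written_review_protocol,
            self.documented_audit_trail,
            self.eo_carrier_written_confirmation,
        ))


def tool_decision(doc_type: str, tool_kind: str, gov: Governance) -> str:
    """Return a coarse recommendation.

    tool_kind is 'generic' (e.g. a general chat assistant) or 'specialty'
    (a purpose-built E&S tool); both labels are hypothetical.
    """
    if doc_type in REGULATORY_DOCS:
        if tool_kind == "generic":
            return "do not use"
        # Specialty tools are MECHANISM-rated: test them, do not rely on them.
        return "pilot only"
    if doc_type in FRONT_END_DOCS:
        if gov.complete():
            return "use with human review"
        return "stop: governance incomplete"
    # Anything the text does not classify falls back to a person.
    return "unclassified: manual review"
```

For example, `tool_decision("underwriter_pitch_deck", "generic", Governance(True, True, False))` returns the stop result, because the E&O carrier's written confirmation is missing. Whatever the function returns, your signature liability stays with you.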

Read on for exactly why generic AI advice fails your situation, which tools are worth your attention, and what to do before you sign up for anything.

Where Your Money's Actually Leaking

You run a surplus lines operation. The workflow is fundamentally different from a standard agency, and that difference is where generic AI advice falls apart.

Here is where you are actually losing time and money.

The Uninsurability Documentation Bottleneck

Before you can place a risk in the non-admitted market, most states require you to document that the admitted market cannot cover it. This means declination records, written coverage gap analysis, and sometimes formal attestation under the state surplus lines statute. Every state has slightly different requirements.

This document is not a boilerplate form. It is a regulatory artifact. If it is missing statutory elements or fails to adequately establish why the admitted market cannot absorb the risk, you have a compliance problem. In California, for example, the Surplus Line Association has specific filing procedures and forms that must accompany placements. [71, 72] The price of a failed regulatory exam on this document is not just a fine. It can be a license problem.

The bottleneck is real: skilled brokers spend hours on a document that is largely formulaic in structure but entirely specific in content. This is exactly the kind of task AI should help with. The problem is that the available tools mostly cannot. Rated CAUSAL. The evidence is solid. Generic tools lack the statutory training to produce defensible uninsurability documentation. You can act on this: do not use ChatGPT, Claude, or Copilot for this work.

Submission Formatting and Market Preparation

A separate but meaningful time drain is the front end of the placement workflow. Writing up the risk narrative, assembling the submission package, and formatting data from your schedule of values into something an underwriter can actually read are all time-consuming and largely mechanical once you know what you are doing.

AI tools can genuinely help here. The liability on a submission package is lower than on the placement documents that follow. You are not certifying regulatory compliance; you are presenting the risk. That distinction matters, and it is where properly governed AI tools earn their cost.

Rated MECHANISM. The logic checks out, and the market adoption trend confirms brokers are moving in this direction. [12, 44] But we do not have hard data yet on error rates in this specific workflow stage. Treat this as a strong lean, not a final answer.

Broker Signature Liability as a Cost Driver

Here is the cost center that almost nobody talks about in AI tool reviews for your industry: your E&O premium.

AI-related errors are driving rising claims in the professional liability market. [81] Your E&O insurer is watching this. Coverage exclusions for AI-generated documents are emerging across the market. [82] If your renewal comes up and your carrier asks whether you are using AI tools to draft placement documents, and you say yes, and you cannot show a governance protocol, you may face a premium increase, a coverage limitation, or a denial.

That is a real number on your P&L. The governance layer you need to protect your E&O coverage is not free. But it costs a lot less than a coverage gap.

Rated CAUSAL for the liability exposure; rated MECHANISM for the E&O coverage contingency finding. The evidence on rising claims is solid. The specific exclusion language carriers are using is not yet published in the sources we found, so exactly how a carrier would limit or deny coverage remains unconfirmed.

Amendment Negotiation Language

When you are mid-placement and the underwriter wants to modify a coverage term, that back-and-forth language is dangerous territory for AI tools. Context collapse is a real failure mode: the tool loses track of prior negotiated terms, generates language that contradicts an earlier amendment, and you sign off without catching it because the document reads plausibly. [9] This is where "the output sounded fine" becomes an E&O claim. Do not use AI tools to draft amendment language without specialist review of every output.

Why The AI Tool Blogs Don't Fit Your Situation

Every "top AI tools for insurance agencies in 2026" article is written for admitted market retail agencies. If that is not what you run, the advice does not transfer. Here is specifically why.

Generic articles assume your documents are standard forms. They are not. An admitted market agency is filling in approved policy forms. The carrier's form is the form. What you produce has much more variation. Your uninsurability documentation, coverage analysis, and declination rationale are custom legal and regulatory documents. The AI tools recommended in generic reviews are built for review and drafting of commercial contracts and standard policy forms. That training does not map to surplus lines placement documents. Rated CAUSAL. The evidence is solid.

Generic articles assume speed is the primary value driver. For an admitted market agency quoting homeowners policies, maybe. For you, accuracy and regulatory defensibility are the primary value drivers. A tool that produces a plausible-sounding uninsurability statement in 30 seconds is worse than useless if it fails a regulatory audit, because now you have a compliance problem you did not know you had.

Generic articles assume your AI risk is the same as everyone else's. It is not. Because you sign every placement document, and because the non-admitted market involves more discretionary judgment than the admitted market, your error exposure per document is higher. The NAIC Model Bulletin, now adopted in more than half of all states, establishes that existing insurance laws apply to AI systems and requires governance, documentation, testing, and third-party oversight. [24, 93] That applies to you directly. Generic AI reviews do not engage with this at all.

Rated CAUSAL on all three points above. The evidence is solid. You can act on this: filter any AI tool advice you read through these three differences before you spend time evaluating it.

Which Tools Fit And Why

Here is how to think through the tool question by workflow stage. The E&S placement workflow breaks into two fundamentally different zones. The tools that fit one zone do not necessarily fit the other.

Zone 1: Front-End Workflow (Lower Liability)

This covers opportunity identification, preliminary risk assessment, submission formatting, underwriter pitch decks, and market selection rationale. These are internal working documents and presentational materials. Your signature does not certify their regulatory compliance; you are presenting the risk and competing for underwriter attention.

AI drafting tools can accelerate this work. The tools worth considering here fall into two categories.

First, general-purpose legal and contract drafting tools used in a constrained, reviewed way. Tool A, built on GPT-4 and designed for transactional drafting, is mentioned in multiple 2026 reviews of contract drafting tools. [1, 3, 5] It is not built for insurance specifically, but for pitch deck and narrative formatting work on submissions, a tool like Tool A with a strong human review step can produce usable output. The constraint is critical: you must implement a documented review protocol, and you must not use it on regulatory documents. Tool A's value here is formatting and language polish, not substance.

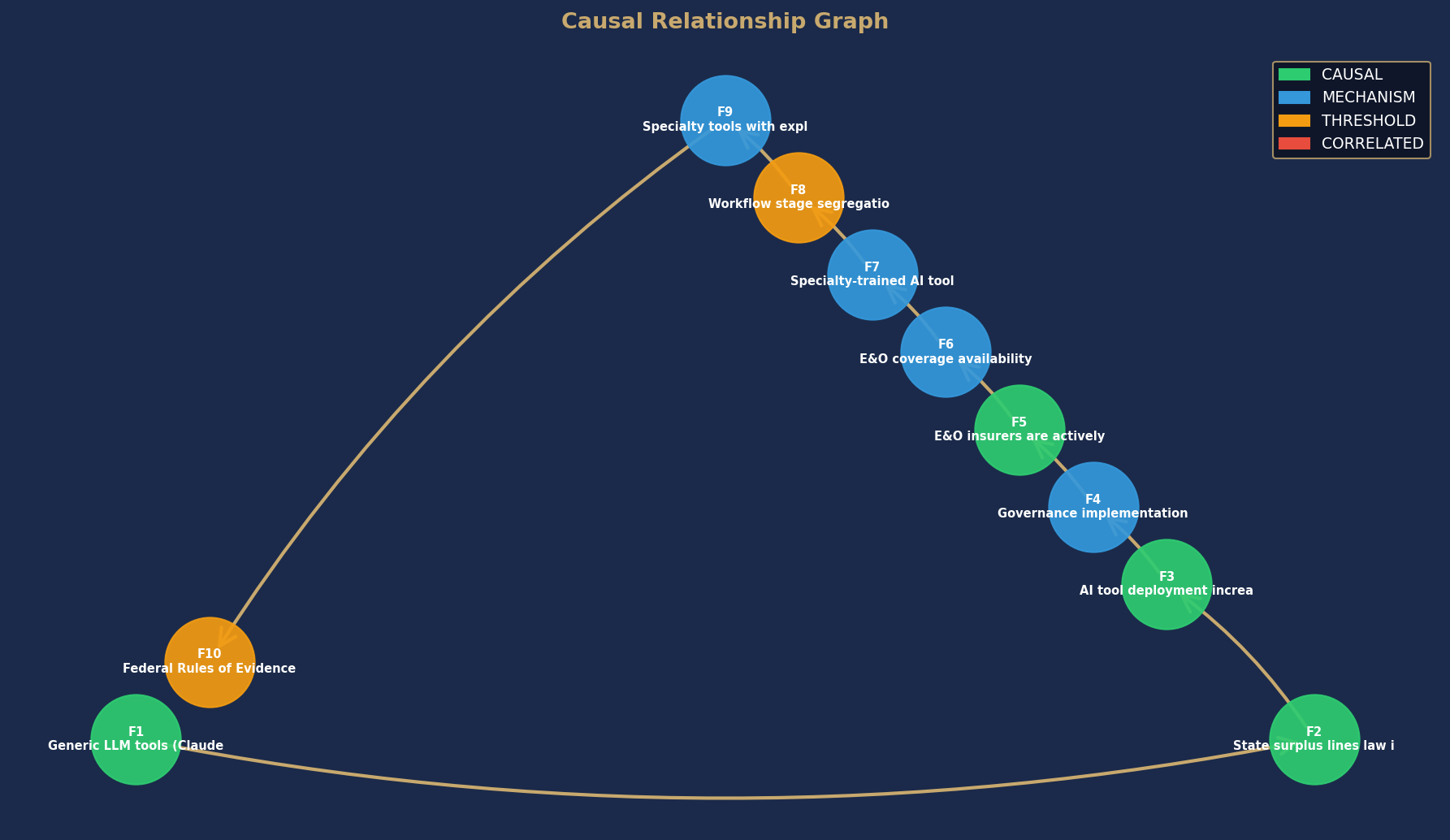

Causal Relationship Graph

Node colors indicate causal confidence rating. Arrows show directional causal relationships identified in this analysis.
